After last month’s Ansible live demo, we continued Bittnet’s Decoding DevOps series with an introductory webinar on Docker, one of the most talked-about technologies today. More than 360 participants joined the live session, where our expert trainer Andrei Ciorba explained why Docker container technology is so popular and how containers work, and showed first-hand how to benefit from their efficiency and speed.

If you couldn’t attend the webinar, below you will find an overview of what Docker containers are and how to get started with them.

Why learn about DevOps?

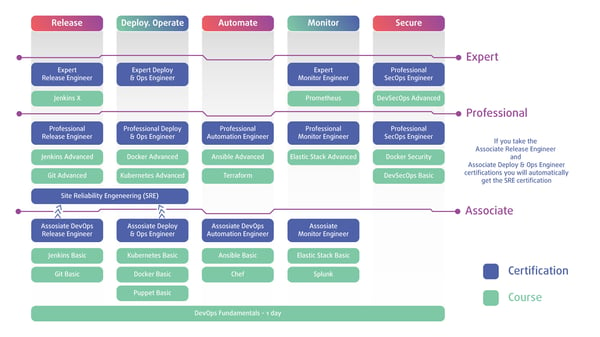

Interest in Docker and containers has been on the rise in companies both large and small in recent years, and these technologies are most often mentioned in the context of DevOps. DevOps is the way many companies today attempt to streamline how they develop and deliver software, by bringing together Development teams, who build the code, and Operations teams, who release and deploy it and ensure that it runs on virtual or cloud infrastructure. The DevOps method relies on shared practices, common processes and the pooling of knowledge between Dev and Ops professionals, bringing about true collaboration and the much-vaunted breaking down of silos.

The DevOps methodology is particularly important given today’s reliance on cloud applications and cloud infrastructure, which demand agility in development, continuous integration and deployment, and constant change in operations. To practice DevOps, IT pros must become proficient in a very wide variety of tools, such as Docker, Kubernetes, Chef, Ansible and many more.

Read: Decoding DevOps – How to Use Ansible for Infrastructure Provisioning and Configuration

Getting started with Docker: What is Docker and what is a container?

To understand the fundamentals of Docker, we must first understand how containers differ from virtual machines (VMs). A VM contains an entire operating system, along with apps and files, and runs on top of a hypervisor that allocates virtual resources (CPU, RAM, etc.) to it from the physical infrastructure that hosts it. A virtual machine can therefore be managed as a standalone server.

Conversely, containers do not encapsulate an OS. Instead, all the containers on a host share that host’s operating system kernel (whether the host is a physical server or itself a VM) and rely on kernel-level mechanisms to keep apps and their resources compartmentalized and secure.

Docker containers were originally designed to run a single application each, so, while they can sometimes be managed much like a VM, they cannot fulfil all the use cases of virtual machines and should not be seen as a replacement for them. A Docker container is meant to provide a standardized software environment for running apps, one that behaves in the same way wherever it is deployed. Containers therefore bring the following benefits to DevOps:

1. Standardization – you can expect a container to behave in the same way on a wide variety of infrastructures.

2. Portability – the app code, libraries and config files can easily be moved between environments, since there is no OS to move and configure.

3. Speed – containers can be deployed and started in seconds, whereas spinning up a virtual machine involves the time-consuming work of booting a full operating system.

4. Ease of distribution – many open source applications are nowadays also distributed online as container images.

5. Easy management at scale – containers bundle their own dependencies and do not rely on other apps, or on host-specific configurations or resources, to run properly. Additionally, because they make it easy to split larger applications into smaller, more manageable and more scalable components, containers have become the building block of microservices.

Understand the basic concepts of Docker and register for the Docker Fundamentals Course

What is inside Docker?

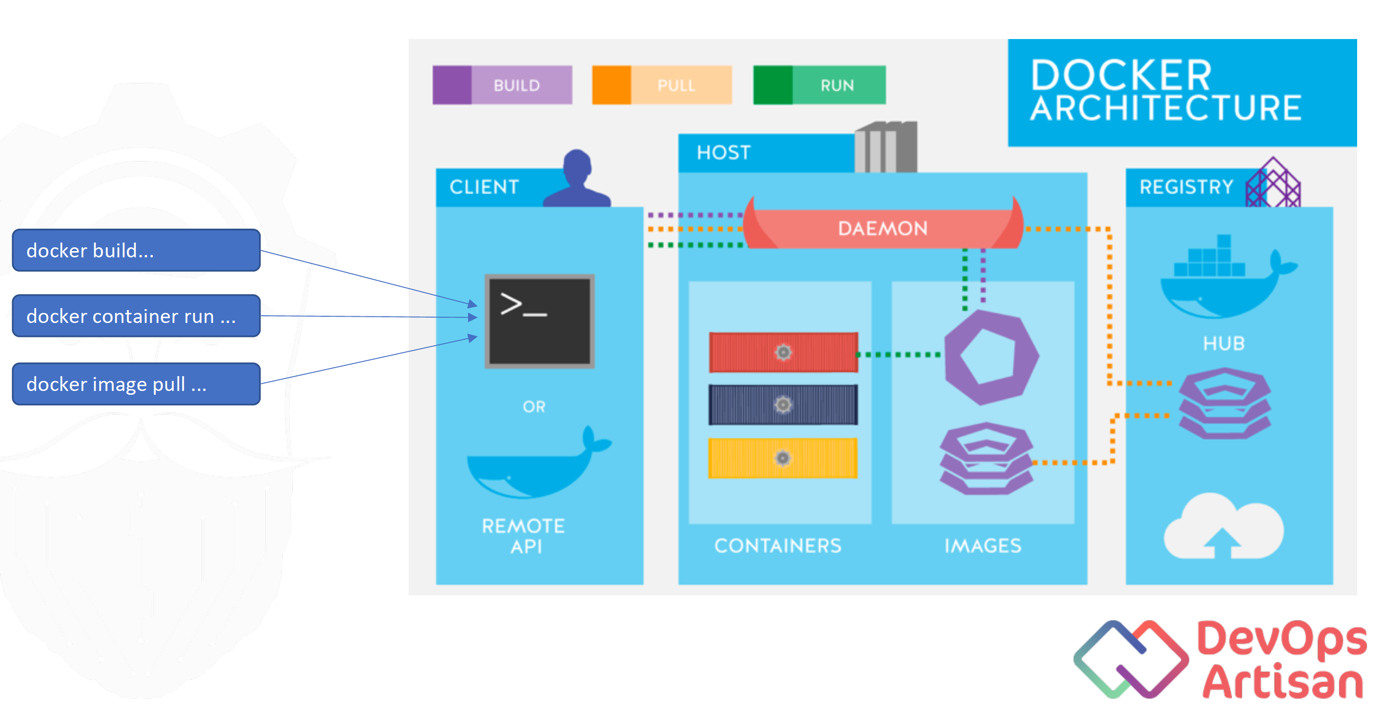

Docker’s architecture consists of the following main elements:

1. Client – the program (such as the docker command-line tool) through which Docker commands are issued.

2. Host – the server (or “daemon”) listens for container commands coming from any client that can send requests to its REST API. This means that Docker can accept commands not only from its own client, but from many others as well. The Docker architecture also allows plugging in, or even replacing, its native components with others as needed.

   The daemon also manages images, networks, volumes, clusters and so forth. In short, a Docker host is a machine running the Docker daemon on top of either a Linux or a Windows kernel.

3. Registry – this component is not, in fact, part of the Docker installation itself. It is the repository for Docker images; by default, that is Docker Hub, a public registry for Docker images (similar to what GitHub is for code). A registry can also be deployed on premises, so that all images are hosted in-house.
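Because the daemon exposes a REST API, any HTTP client can play the client role. The sketch below assumes a Linux host where the daemon listens on its default Unix socket, /var/run/docker.sock, and calls the Engine API’s `GET /version` endpoint using only the Python standard library. The helper names `UnixHTTPConnection` and `docker_get` are our own illustration, not part of any Docker SDK.

```python
import http.client
import json
import socket

# Default location of the Docker daemon's Unix socket on Linux (assumes a standard install).
DEFAULT_SOCKET = "/var/run/docker.sock"


class UnixHTTPConnection(http.client.HTTPConnection):
    """HTTP connection that travels over a Unix domain socket instead of TCP."""

    def __init__(self, socket_path: str, timeout: float = 5.0):
        # "localhost" only ends up in the HTTP Host header; the socket path
        # decides where the request is actually sent.
        super().__init__("localhost", timeout=timeout)
        self.socket_path = socket_path

    def connect(self) -> None:
        sock = socket.socket(socket.AF_UNIX, socket.SOCK_STREAM)
        sock.settimeout(self.timeout)
        try:
            sock.connect(self.socket_path)
        except OSError:
            sock.close()  # do not leak the socket if the daemon is unreachable
            raise
        self.sock = sock


def docker_get(path: str, socket_path: str = DEFAULT_SOCKET):
    """Send a GET request to the Docker Engine API and decode the JSON reply."""
    conn = UnixHTTPConnection(socket_path)
    try:
        conn.request("GET", path)
        response = conn.getresponse()
        return response.status, json.loads(response.read())
    finally:
        conn.close()
```

With Docker running and your user allowed to access the socket, `docker_get("/version")` should return a 200 status and a dictionary with fields such as `Version` and `ApiVersion`, while `docker_get("/containers/json")` lists running containers, much like `docker ps`. Official SDKs, such as the Docker SDK for Python, wrap these same endpoints.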

If you want to learn more about Docker, register for the Docker Advanced Course

Why is Docker so popular – and why should you use it?

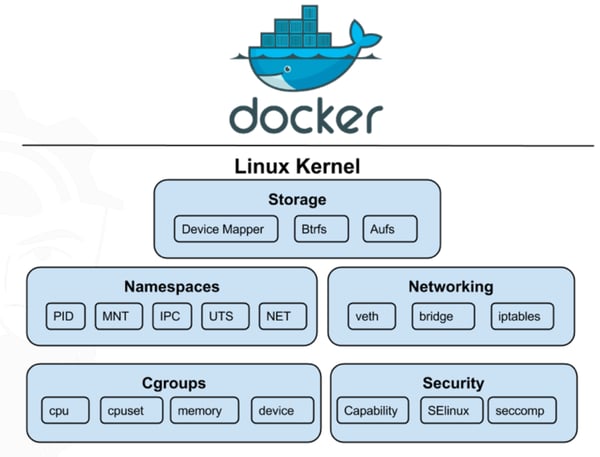

Docker is so popular because it made it easy to access and use several Linux kernel features that already existed but had remained largely untapped for isolating and securing containers. In effect, Docker abstracts several layers of Linux kernel technology and makes them much more user-friendly.

As a result, you can create and manage containers easily, without having to concern yourself with low-level details. Here are some of the aspects that Docker has streamlined to make container creation and management more efficient.

Namespaces

Namespaces give each container its own isolated view of global system resources, which, among other things, prevents naming collisions. This is essential for container isolation because, unlike with VMs, there is otherwise no hard separation between the processes running inside a container and those running on the host. Every process started in a container is, in fact, an ordinary process on the host and is allocated the next available host process ID (PID). To prevent conflicts between these process IDs, for example, a PID namespace gives the container its own separate series of PIDs, independent of the PIDs on the host, so that each container can function independently.

Other types of namespaces do the same for network interfaces, file systems and mount points, IPC resources, host names and users, which makes namespaces crucial for keeping these elements separate between containers, and between containers and their host.
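To make the PID example concrete, here is a toy model in Python. It is not kernel code, and the classes `Host` and `PidNamespace` are purely illustrative: every process receives the next host PID, and a process started inside a namespace also receives a second, namespace-local PID that starts at 1. On a real Linux system, running ps on the host lists a container’s processes under their host PIDs, while ps inside the container shows the namespace-local ones.

```python
from __future__ import annotations

import itertools


class PidNamespace:
    """Toy PID namespace: hands out its own PIDs, starting at 1."""

    def __init__(self, name: str):
        self.name = name
        self._next_pid = itertools.count(1)
        self.to_host: dict[int, int] = {}  # namespace PID -> host PID


class Host:
    """Toy model of the host kernel's process table."""

    def __init__(self):
        self._next_pid = itertools.count(1)
        self.processes: dict[int, str] = {}  # host PID -> command

    def spawn(self, command: str, ns: PidNamespace | None = None) -> tuple[int, int]:
        """Start a process and return (host PID, PID seen inside its namespace)."""
        host_pid = next(self._next_pid)  # every container process is also a host process
        self.processes[host_pid] = command
        if ns is None:
            return host_pid, host_pid  # processes on the host simply see host PIDs
        ns_pid = next(ns._next_pid)  # separate numbering inside the container
        ns.to_host[ns_pid] = host_pid
        return host_pid, ns_pid


if __name__ == "__main__":
    host = Host()
    web, db = PidNamespace("web"), PidNamespace("db")
    print(host.spawn("systemd"))           # (1, 1)
    print(host.spawn("nginx", web))        # (2, 1)
    print(host.spawn("postgres", db))      # (3, 1)
    print(host.spawn("nginx-worker", web)) # (4, 2)
```

Both containers believe their first process is PID 1, yet the host still tracks four distinct processes, which is exactly the separation the PID namespace provides.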

cgroups

Docker containers running on the same host will, of course, share that host’s resources (CPU, RAM, I/O, etc.). However, by default, there is nothing to prevent, for example, a single container from taking over all of the host’s resources. That’s where control groups (cgroups) come in. Docker places each container’s processes in their own cgroup and uses this kernel feature to limit the resources allocated to each container, as well as to define a policy for sharing resources among the various containers running on a given host.
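As a rough illustration of such a sharing policy: the docker run option --cpu-shares (default 1024) sets a relative weight that only matters when containers compete for CPU, while an option such as --memory sets a hard cap. The sketch below is a simplified model of how relative weights translate into CPU time, assuming every container is CPU-bound at the same moment. The function name `cpu_split` is hypothetical, and the real kernel scheduler is considerably more nuanced.

```python
from __future__ import annotations


def cpu_split(shares: dict[str, int], cpus: float) -> dict[str, float]:
    """Split `cpus` among fully busy containers in proportion to their CPU shares.

    Simplified model: it assumes every container wants as much CPU as it can
    get. In practice, capacity left idle by one container is used by others.
    """
    total = sum(shares.values())
    if total <= 0:
        raise ValueError("at least one container needs a positive share")
    return {name: cpus * weight / total for name, weight in shares.items()}


if __name__ == "__main__":
    # Two containers on a 3-CPU host: "web" keeps the default weight of 1024,
    # "batch" was given half of that (512).
    print(cpu_split({"web": 1024, "batch": 512}, cpus=3.0))
    # {'web': 2.0, 'batch': 1.0}
```

Weights are relative rather than absolute, so doubling every container’s shares changes nothing; only the ratios between them matter.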

Docker images and layered file system

Even though a layered file system is not a requirement for containers per se, Docker makes good use of this feature for efficient image and container management. To begin with, Docker considers an image to be just the minimum set of files on disk that are needed to run an app (the app itself, its code, libraries, dependencies, etc.). Images are made up of multiple read-only layers, which preserves the consistency of the base image.

With Docker, images are used as templates for running containers, and there is no built-in limit to the number of containers that can be spun up from a single image. Every time a container starts from an image, the image is mounted, read-only, inside that container.

However, since the image is read-only (and shared by all the containers that rely on it), how can a running container make any changes? The solution comes from the layered file system: each container gets its own thin writable layer on top of the image, and the container can only write to that layer, via a copy-on-write (COW) mechanism. When the container modifies a file that comes from the image, the file is first copied up into the writable layer and the copy is changed, leaving the image untouched. The writable layer exists for as long as the container does, even while it is stopped, and its contents are deleted when the container is removed.
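The copy-on-write behaviour can be modelled in a few lines. The sketch below is a toy, in-memory stand-in for a union file system such as the overlayfs used by Docker’s overlay2 storage driver; the class `ContainerFS` is hypothetical. Image layers are wrapped in read-only views, reads search the layers from top to bottom, writes and modifications go to the container’s own writable layer, and deletions are recorded as “whiteout” markers instead of touching the image.

```python
from __future__ import annotations

from types import MappingProxyType


class ContainerFS:
    """Toy union file system: shared read-only image layers plus one writable layer."""

    def __init__(self, image_layers: list[dict[str, str]]):
        # Layers are listed bottom first and exposed through read-only views,
        # so a container cannot modify the image it shares with others.
        self.layers = [MappingProxyType(layer) for layer in image_layers]
        self.upper: dict[str, str] = {}   # this container's writable layer
        self.whiteouts: set[str] = set()  # image files deleted in this container

    def read(self, path: str) -> str:
        if path in self.whiteouts:
            raise FileNotFoundError(path)
        if path in self.upper:
            return self.upper[path]
        for layer in reversed(self.layers):  # the topmost layer wins
            if path in layer:
                return layer[path]
        raise FileNotFoundError(path)

    def write(self, path: str, data: str) -> None:
        self.whiteouts.discard(path)
        self.upper[path] = data

    def append(self, path: str, data: str) -> None:
        # Copy-on-write: copy the current content up into the writable layer,
        # then modify the copy; the image layer itself is never changed.
        self.write(path, self.read(path) + data)

    def delete(self, path: str) -> None:
        self.read(path)  # raises FileNotFoundError if the file is not visible
        self.upper.pop(path, None)
        self.whiteouts.add(path)


if __name__ == "__main__":
    base = {"/etc/hostname": "base", "/bin/sh": "<shell>"}
    app = {"/app/config": "debug=false"}
    c1, c2 = ContainerFS([base, app]), ContainerFS([base, app])  # one image, two containers
    c1.append("/app/config", ";verbose")
    print(c1.read("/app/config"))  # debug=false;verbose
    print(c2.read("/app/config"))  # debug=false
    print(app["/app/config"])      # debug=false
```

Discarding `c1` here plays the role of removing the container: its writable layer disappears, while the shared image layers stay exactly as they were for every other container.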

Whenever you need container image templates, feel free to explore Docker Hub.
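When you pull an image by a short name, Docker fills in a default registry, namespace and tag. The sketch below reproduces, in simplified form, the convention the Docker CLI follows: a name without a registry host resolves to Docker Hub (docker.io), single-component names on Docker Hub get the library/ namespace used for official images, and a missing tag defaults to latest. The function `normalize_image_ref` is our own illustration; it does not validate references and skips edge cases that the full reference grammar covers.

```python
DEFAULT_REGISTRY = "docker.io"  # Docker Hub
OFFICIAL_NAMESPACE = "library"  # namespace of Docker Hub's official images
DEFAULT_TAG = "latest"


def normalize_image_ref(ref: str) -> str:
    """Expand a short image reference into registry/namespace/name:tag form."""
    name, _, digest = ref.partition("@")
    # A tag is a ':' that appears after the last '/', so in "localhost:5000/app"
    # the ':' (which introduces a port) is not mistaken for a tag separator.
    tag = ""
    last_colon = name.rfind(":")
    if last_colon > name.rfind("/"):
        name, tag = name[:last_colon], name[last_colon + 1:]
    # The first path component is a registry host only if it looks like one.
    first, sep, rest = name.partition("/")
    if sep and ("." in first or ":" in first or first == "localhost"):
        registry, path = first, rest
    else:
        registry, path = DEFAULT_REGISTRY, name
    if registry == DEFAULT_REGISTRY and "/" not in path:
        path = f"{OFFICIAL_NAMESPACE}/{path}"
    if digest:
        return f"{registry}/{path}{':' + tag if tag else ''}@{digest}"
    return f"{registry}/{path}:{tag or DEFAULT_TAG}"


if __name__ == "__main__":
    for ref in ["nginx", "bitnami/redis:7.2", "localhost:5000/myapp"]:
        print(ref, "->", normalize_image_ref(ref))
```

Running it prints the expanded forms docker.io/library/nginx:latest, docker.io/bitnami/redis:7.2 and localhost:5000/myapp:latest, which shows why a bare name such as nginx ends up being pulled from Docker Hub.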

How can you learn more about Docker?

There are many other aspects of Docker to learn about, such as persistent storage, container networking, security, image management, Compose files and Docker Swarm.

If you need more in-depth knowledge about Docker and the opportunity to put that knowledge into practice in real, live environments and scenarios, take a look at DevOps Artisan, the latest Bittnet training curriculum for the DevOps technologies that matter.